
- Homebrew - How To Build Ffmpeg With Vo-amrwbenc Library ...

- Homebrew Ffmpeg.55.64bit.dylib

- Github Homebrew Ffmpeg

- Installing Ffmpeg Through Homebrew: Dyld Library Not ...

- Command Not Found Ffmpeg

- Helpful Terminal Commands, Homebrew, And Ffmpeg - Terminal Tinkering 7

Dec 30, 2013. Example: `brew install ffmpeg --with-fdk-aac --with-tools`. A couple of quick notes. You might be asking “what’s the difference between Homebrew and MacPorts?” Well, they basically do the same thing. Homebrew is a little easier to use; MacPorts is a little more complicated but powerful (though many would argue the point).

I need the FFmpeg options that were removed from Homebrew, so I took it upon myself to publish a tap. Perhaps with the assistance of the community (this means you!), we can improve the formula and keep it reasonably well-maintained as new versions of FFmpeg are released.

`$ brew install ffmpeg --force`
`$ brew link ffmpeg`
Now you should be good to go. (Answered May 18 '16 by Danijel-James W; edited Mar 22 '20.)

This week, we go over some interesting and useful Terminal commands. We also look at the missing macOS package manager, Homebrew, and do some video conversion.

1. Install the FFmpeg CLI through Homebrew

In Terminal.app, install FFmpeg through Homebrew.

Validate the installation:

Expect the terminal to return the path to the ffmpeg binary, such as /usr/local/bin/ffmpeg.
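The commands for this step were lost from the page. A minimal sketch of both steps, assuming Homebrew is already installed; the function name `install_ffmpeg` is hypothetical:

```shell
# Hypothetical helper: install FFmpeg with Homebrew, then print the path
# of the ffmpeg binary that the shell will use.
install_ffmpeg() {
  brew install ffmpeg || return 1
  # On Intel Macs this is typically /usr/local/bin/ffmpeg; Homebrew on
  # Apple Silicon installs under /opt/homebrew/bin instead.
  which ffmpeg
}

# Usage:
# install_ffmpeg
```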

2. Set webp properties and convert

Example command that converts an MP4 file to a lossless, looping WebP file at 20 fps with a resolution of 800 px (width) × 600 px (height):

Export an animated lossy WebP with preset mode picture:

Primary options:

- Set the frame rate to 20 fps: `-filter:v fps=fps=20`

- Make the output lossless: `-lossless 1`

- Make the output WebP loop indefinitely: `-loop 0` (for a single, non-looping play, use `-loop 1`)

- Set the encoding preset: `-preset default`. It can also be set to `picture`, `photo`, `text`, `icon`, `drawing`, or `none` as needed, which affects the output file size. See http://ffmpeg.org/ffmpeg-all.html#Options-28

- Set the output WebP resolution to 800 × 600 px: `-s 800:600`

For more option details, please visit the FFmpeg libwebp documentation.

This method should work for most video formats used as input, including .mov, .avi, and .flv, and it can also write .gif files as output.
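The example command itself is missing above. Assembling the listed options into one call gives something like the following sketch; it assumes an FFmpeg build that includes the libwebp encoder, and the wrapper name `video_to_webp` is hypothetical:

```shell
# Hypothetical wrapper: video -> lossless, endlessly looping animated WebP
# at 20 fps and 800x600, using the options listed above.
video_to_webp() {
  ffmpeg -i "$1" \
    -c:v libwebp \
    -filter:v fps=fps=20 \
    -lossless 1 \
    -loop 0 \
    -preset default \
    -s 800:600 \
    "$2"
}

# Usage:
# video_to_webp input.mp4 output.webp
```

For the lossy `picture` variant mentioned above, swapping in `-lossless 0` and `-preset picture` would be the natural change, though the original command for it was not preserved.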

Contents

- Installing FFmpeg

- Preliminary Command Examples

- Color Data Analysis

- Wrap Up

Introduction

The Digital Humanities, as a discipline, have historically focused almost exclusively on the analysis of textual sources through computational methods (Hockey, 2004). However, there is growing interest in the field around using computational methods for the analysis of audiovisual cultural heritage materials as indicated by the creation of the Alliance of Digital Humanities Organizations Special Interest Group: Audiovisual Materials in the Digital Humanities and the rise in submissions related to audiovisual topics at the global ADHO conference over the past few years. Newer investigations, such as Distant Viewing TV, also indicate a shift in the field toward projects concerned with using computational techniques to expand the scope of materials digital humanists can investigate. As Erik Champion states, “The DH audience is not always literature-focused or interested in traditional forms of literacy,” and applying digital methodologies to the study of audiovisual culture is an exciting and emerging facet of the discipline (Champion, 2017). There are many valuable, free, and open-source tools and resources available to those interested in working with audiovisual materials (for example, the Programming Historian tutorial Editing Audio with Audacity), and this tutorial will introduce another: FFmpeg.

FFmpeg is “the leading multimedia framework able to decode, encode, transcode, mux, demux, stream, filter, and play pretty much anything that humans and machines have created” (FFmpeg Website - “About”). Many common software applications and websites use FFmpeg to handle reading and writing audiovisual files, including VLC, Google Chrome, YouTube, and many more. In addition to being a software and web-developer tool, FFmpeg can be used at the command-line to perform many common, complex, and important tasks related to audiovisual file management, alteration, and analysis. These kinds of processes, such as editing, transcoding (re-encoding), or extracting metadata from files, usually require access to other software (such as a non-linear video editor like Adobe Premiere or Final Cut Pro), but FFmpeg allows a user to operate on audiovisual files directly without the use of third-party software or interfaces. As such, knowledge of the framework empowers users to manipulate audiovisual materials to meet their needs with a free, open-source solution that carries much of the functionality of expensive audio and video editing software. This tutorial will provide an introduction to reading and writing FFmpeg commands and walk through a use-case for how the framework can be used in Digital Humanities scholarship (specifically, how FFmpeg can be used to extract and analyze color data from an archival video source).

Learning Objectives

- Install FFmpeg on your computer or use a demo version in your web browser

- Understand the basic structure and syntax of FFmpeg commands

- Execute several useful commands such as:

- Re-wrapping (change file container) & Transcoding (re-encode files)

- Demuxing (separating audio and video tracks)

- Trimming/Editing files

- File playback with FFplay

- Creating vectorscopes for color data visualization

- Generating color data reports with FFprobe

- Introduce outside resources for further exploration and experimentation

Prerequisites

Before starting this tutorial, you should be comfortable with locating and using your computer’s Terminal or other command-line interface, as this is where you will be entering and executing FFmpeg commands. If you need instruction on how to access and work at the command-line, I recommend the Programming Historian’s Bash tutorial for Mac and Linux users or the Windows PowerShell tutorial. A basic understanding of audiovisual codecs and containers will also be useful for understanding what FFmpeg does and how it works. We will provide some additional information and discuss codecs and containers in a bit more detail in the Preliminary Command Examples section of this tutorial.

Installing FFmpeg can be the most difficult part of using FFmpeg. Thankfully, there are some helpful guides and resources available for installing the framework based on your operating system.

For Mac OS Users

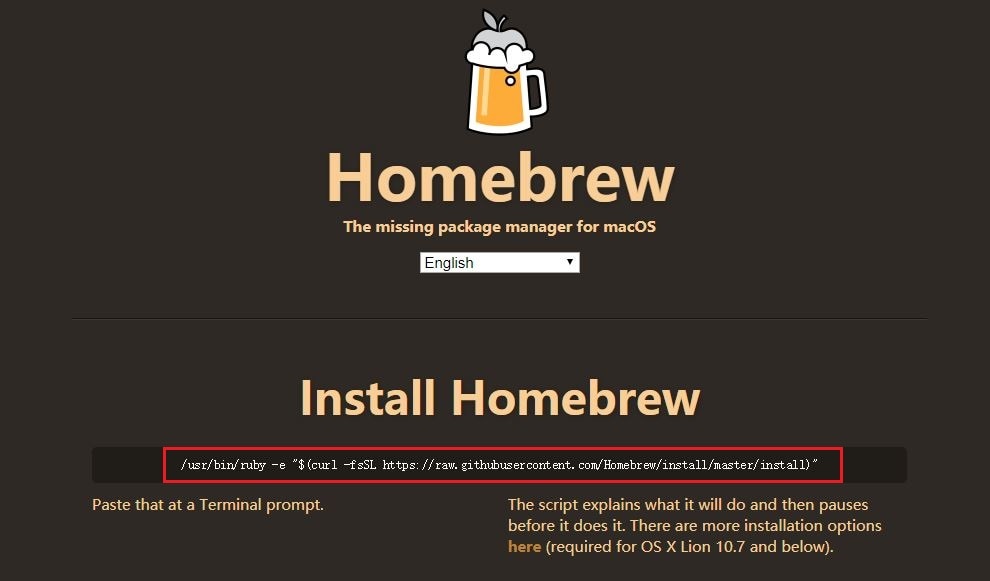

The simplest option is to use a package manager such as Homebrew to install FFmpeg and keep it up to date. Homebrew is also useful in ensuring that your computer has the necessary dependencies installed for FFmpeg to run properly. To complete this kind of installation, follow these steps:

- Install Homebrew following the instructions in the above link

- You can then run `brew install ffmpeg` in your Terminal to initiate a basic installation.

- Note: Generally, it is recommended to install FFmpeg with more features than the basic installation includes. Including additional options will provide access to more of FFmpeg’s tools and functionalities. Reto Kromer’s Apple installation guide provides a good set of additional options.

- For an explanation of these additional options, refer to Ashley Blewer’s FFmpeg guide.

- Additionally, you can run `brew options ffmpeg` to see which build options, if any, are available with the current FFmpeg formula.

After installing, it is best practice to update Homebrew and FFmpeg so that all dependencies and features are up to date by running:
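The update command is not shown on the page; with Homebrew it is usually the following pair, sketched here inside a hypothetical `update_ffmpeg` function:

```shell
# Refresh Homebrew's list of formulae, then upgrade FFmpeg (and any
# dependencies that need it) to the newest version Homebrew offers.
update_ffmpeg() {
  brew update && brew upgrade ffmpeg
}

# Usage:
# update_ffmpeg
```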

- For more installation options for Mac OS, see the Mac OS FFmpeg Compilation Guide

For Windows Users

Windows users can use the package manager Chocolatey to install and maintain FFmpeg. Reto Kromer’s Windows installation guide provides all the necessary information to use Chocolatey or to install the software from a build.

For Linux Users

Linuxbrew, a program similar to Homebrew, can be used to install and maintain FFmpeg in Linux. Reto Kromer also provides a helpful Linux installation guide that closely resembles the Mac OS installation. Your distribution of Linux may also have its own package manager already installed that includes FFmpeg packages. Depending on your distribution of Linux (Ubuntu, Fedora, Arch Linux, etc.) these builds can vary, so using Linuxbrew could be useful to ensure that the build is the same regardless of which type of Linux you are using.

Other Installation Resources

- Download Packages

- FFmpeg allows access to binary files, source code, and static builds for Mac, Windows, and Linux directly through its website, enabling users to build the framework without a package manager. It is likely that only advanced users will want to follow this option.

- FFmpeg Compilation Guide

- The FFmpeg Wiki page also provides a compendium of guides and strategies for building FFmpeg on your computer.

Testing the Installation

To ensure FFmpeg is installed properly, run:
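The test command itself was lost; `ffmpeg -version` prints the version and build configuration, which is the long output described next. A sketch that also reports the "command not found" case explicitly (the function name is hypothetical):

```shell
# Hypothetical check: report whether ffmpeg is on PATH and, if so,
# print its version and build configuration.
check_ffmpeg() {
  if ! command -v ffmpeg >/dev/null 2>&1; then
    echo "ffmpeg: command not found" >&2
    return 127
  fi
  ffmpeg -version
}

# Usage:
# check_ffmpeg
```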

If you see a long output of information, the installation was successful! It should look similar to this:

If you see something like `-bash: ffmpeg: command not found`, then something has gone wrong.

- Note: If you are using a package manager, it is unlikely that you will encounter this error message. However, if there is a problem after installing with a package manager, it is likely the issue is with the package manager itself as opposed to FFmpeg. Consult the Troubleshooting sections for Homebrew, Chocolatey, or Linuxbrew to ensure the package manager is functioning properly on your computer. If you are attempting to install without a package manager and see this error message, cross-reference your method with the FFmpeg Compilation Guide provided above.

Using FFmpeg in a web browser (without installing)

If you do not want to install FFmpeg on your computer but would like to become familiar with using it at the command-line, Brian Grinstead’s videoconverter.js provides a way to run FFmpeg commands and learn its basic functions in the web-browser of your choice.


Basic FFmpeg commands consist of four elements:

- A command prompt will begin every FFmpeg command. Depending on the use, this prompt will be `ffmpeg` (changing files), `ffprobe` (gathering metadata from files), or `ffplay` (playback of files).

- Input files are the files being read, edited, or examined.

- Flags and actions are the things you are telling FFmpeg to do to the input files. Most commands will contain multiple flags and actions of various complexity.

- The output file is the new file created by the command or the report generated by an `ffprobe` command.

Written generically, a basic FFmpeg command looks like this:
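The template did not survive extraction. Following the four elements listed above, a generic sketch can be written as a small wrapper; the name `run_ffmpeg` and its argument order are assumptions made for illustration:

```shell
# Hypothetical wrapper mirroring the four elements of an FFmpeg command:
#   ffmpeg -i INPUT [FLAGS AND ACTIONS...] OUTPUT
# Usage: run_ffmpeg INPUT OUTPUT [FLAGS AND ACTIONS...]
run_ffmpeg() {
  [ "$#" -ge 2 ] || return 2   # need at least an input and an output
  local input="$1" output="$2"
  shift 2
  ffmpeg -i "$input" "$@" "$output"
}
```

For example, `run_ffmpeg destEarth.ogv out.mp4 -c:v libx264` runs `ffmpeg -i destEarth.ogv -c:v libx264 out.mp4`.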

Next, we will look at some examples of several different commands that use this structure and syntax. These commands will also demonstrate some of FFmpeg’s most basic, useful functions and allow us to become more familiar with how digital audiovisual files are constructed.

For this tutorial, we will be taking an archival film called Destination Earth as our object of study. This film has been made available by the Prelinger Archives collection on the Internet Archive. Released in 1956, this film is a prime example of Cold War-era propaganda produced by the American Petroleum Institute and John Sutherland Productions that extols the virtues of capitalism and the American way of life. Utilizing the Technicolor process, this science-fiction animated short tells a story of a Martian society living under an oppressive government and their efforts to improve their industrial methods. They send an emissary to Earth who discovers the key to this is oil refining and free-enterprise. We will be using this video to introduce some of the basic functionalities of FFmpeg and analyzing its color properties in relation to its propagandist rhetoric.

For this tutorial, you will need to:

- Navigate to the Destination Earth page on the Internet Archive (IA)

- Download two video files: the “MPEG4” (file extension `.m4v`) and “OGG” (file extension `.ogv`) versions of the film

- Save these two video files in the same folder somewhere on your computer, naming each `destEarth` followed by its extension

Take a few minutes to watch the video and get a sense of its structure, message, and visual motifs before moving on with the next commands.

Viewing Basic Metadata with FFprobe

Before we begin manipulating our destEarth files, let’s use FFmpeg to examine some basic information about the file itself using a simple ffprobe command. This will help illuminate how digital audiovisual files are constructed and provide a foundation for the rest of the tutorial. Navigate to the file’s directory and execute:
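The command itself was lost; it is simply `ffprobe` followed by the file name. Wrapped in a hypothetical helper, with `-hide_banner` added (an optional flag that suppresses the version and build preamble):

```shell
# Print the container, stream, and codec summary for one file.
# ffprobe writes this summary to stderr, so it appears in the terminal
# even though nothing is sent to stdout.
probe() {
  ffprobe -hide_banner "$1"
}

# Usage:
# probe destEarth.ogv
```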

You will see the file’s basic technical metadata printed to the terminal:

The Input #0 line of the report identifies the container as ogg. Containers (also called “wrappers”) provide the file with structure for its various streams. Different containers (other common ones include .mkv, .avi, and .flv) have different features and compatibilities with various software. We will examine how and why you might want to change a file’s container in the next command.

The lines Stream #0:0 and Stream #0:1 provide information about the file’s streams (i.e. the content you see on screen and hear through your speakers) and identify the codec of each stream as well. Codecs specify how information is encoded/compressed (written and stored) and decoded/decompressed (played back). Our .ogv file’s video stream (Stream #0:0) uses the theora codec while the audio stream (Stream #0:1) uses the vorbis codec. These lines also provide important information related to the video stream’s colorspace (yuv420p), resolution (400x300), and frame-rate (29.97 fps), in addition to audio information such as sample-rate (44100 Hz) and bit-rate (128 kb/s).

Codecs, to a much greater extent than containers, determine an audiovisual file’s quality and compatibility with different software and platforms (other common codecs include DNxHD and ProRes for video and mp3 and FLAC for audio). We will examine how and why you might want to change a file’s codec in the next command as well.

Run another ffprobe command, this time with the .m4v file:
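The plain form of this command is `ffprobe destEarth.m4v`. For a compact side-by-side comparison of the two files' codecs and frame sizes, the following variant (an addition, not part of the original tutorial; the function name is hypothetical) prints only those fields:

```shell
# Hypothetical helper: one line per stream with the codec name and, for
# video streams, the frame size. -v error hides everything but errors,
# and -of csv=p=0 prints bare comma-separated values.
stream_summary() {
  ffprobe -v error -show_entries stream=codec_name,width,height -of csv=p=0 "$1"
}

# Usage:
# stream_summary destEarth.ogv
# stream_summary destEarth.m4v
```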

Again you’ll see the basic technical metadata printed to the terminal:

You’ll also notice that the report for the .m4v file contains multiple containers on the Input #0 line like mov and m4a. It isn’t necessary to get too far into the details for the purposes of this tutorial, but be aware that the mp4 and mov containers come in many “flavors” and different file extensions. However, they are all very similar in their technical construction, and as such you may see them grouped together in technical metadata. Similarly, the ogg file has the extension .ogv, a “flavor” or variant of the ogg format.

Just as in our previous command, the lines Stream #0:0 and Stream #0:1 identify the codec of each stream. We can see our .m4v file uses the H.264 video codec while the audio stream uses the aac codec. Notice that we are given similar metadata to our .ogv file but some important features related to visual analysis (such as the resolution) are significantly different. Our .m4v has a much higher resolution (640x480) and we will therefore use this version of Destination Earth as our source video.

Now that we know more about the technical make-up of our file, we can begin exploring the transformative features and functionalities of FFmpeg (we will use ffprobe again later in the tutorial to conduct more advanced color metadata extraction).

Changing Containers and Codecs (Re-Wrap and Transcode)

Depending on your operating system, you may have one or more media players installed. For the purposes of demonstration, let’s see what happens if you try to open destEarth.ogv using the QuickTime media player that comes with Mac OSX:

One option when faced with such a message is to simply use another media player. VLC, which is built with FFmpeg, is an excellent open-source alternative, but simply “using another software” may not always be a viable solution (and you may not always have another version of a file to work with, either). Many popular video editors such as Adobe Premiere, Final Cut Pro, and DaVinci Resolve all have their own limitations on the kinds of formats they are compatible with. Further, different web-platforms and hosting/streaming sites such as Vimeo have their own requirements as well. As such, it is important to be able to re-wrap and transcode your files to meet the various specifications for playback, editing, digital publication, and conforming files to standards required by digital preservation or archiving platforms.

To see the full lists of codecs and containers supported by your FFmpeg installation, run `ffmpeg -codecs` and `ffmpeg -formats`, respectively; each prints its list to your stdout.

As an exercise in learning basic FFmpeg syntax and learning how to transcode between formats, we will begin with our destEarth.ogv file and write a new file with video encoded to H.264, audio to AAC, and wrapped in an .mp4 container, a very common and highly-portable combination of codecs and container that is practically identical to the .m4v file we originally downloaded. Here is the command you will execute along with an explanation of each part of the syntax:

- `ffmpeg` = starts the command

- `-i destEarth.ogv` = specifies the input file

- `-c:v libx264` = transcodes the video stream to the H.264 codec

- `-c:a aac` = transcodes the audio stream to the AAC codec

- `destEarth_transcoded.mp4` = specifies the output file. Note this is where the new container type is specified.
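Put together, the parts listed above form the command `ffmpeg -i destEarth.ogv -c:v libx264 -c:a aac destEarth_transcoded.mp4`. As a reusable sketch (the function name is hypothetical, and `libx264` assumes your FFmpeg build includes that encoder, as standard Homebrew builds typically do):

```shell
# Hypothetical wrapper: re-encode video to H.264 and audio to AAC; the
# .mp4 extension of the output name selects the MP4 container.
transcode_to_mp4() {
  ffmpeg -i "$1" -c:v libx264 -c:a aac "$2"
}

# Usage:
# transcode_to_mp4 destEarth.ogv destEarth_transcoded.mp4
```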

If you execute this command as it is written and in the same directory as destEarth.ogv, you will see a new file called destEarth_transcoded.mp4 appear in the directory. If you are operating in Mac OSX, you will also be able to play this new file with QuickTime. A full exploration of codecs, containers, compatibility, and file extension conventions is beyond the scope of this tutorial; however, this preliminary set of examples should give those less familiar with how digital audiovisual files are constructed a baseline set of knowledge that will enable them to complete the rest of the tutorial.

Creating Excerpts & Demuxing Audio & Video


Now that we have a better understanding of streams, codecs, and containers, let’s look at ways FFmpeg can help us work with video materials at a more granular level. For this tutorial, we will examine two discrete sections of Destination Earth to compare how color is used in relation to the film’s propagandist rhetoric. We will create and prepare these excerpts for analysis using a command that performs two different functions simultaneously:

- First, the command will create two excerpts from `destEarth.m4v`.

- Second, the command will remove (“demux”) the audio components (`Stream #0:1`) from these excerpts.

We are removing the audio in the interest of promoting good practice in saving storage space (the audio information is not necessary for color analysis). This will likely be useful if you hope to use this kind of analysis at larger scales. More on scaling color analysis will be provided near the end of the tutorial.

The first excerpt we will be making is a sequence near the beginning of the film depicting the difficult conditions and downtrodden life of the Martian society. The following command specifies start and end points of the excerpt, tells FFmpeg to retain all information in the video stream without transcoding anything, and to write our new file without the audio stream:

- `ffmpeg` = starts the command

- `-i destEarth.m4v` = specifies the input file

- `-ss 00:01:00` = sets the start point at 1 minute from the start of the file

- `-to 00:04:45` = sets the end point at 4 minutes and 45 seconds from the start of the file

- `-c:v copy` = copies the video stream directly, without transcoding

- `-an` = tells FFmpeg to ignore the audio stream when writing the output file

- `destEarth_Mars_video.mp4` = specifies the output file
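Assembled from those parts, the command reads as follows (wrapped in a hypothetical function). One hedged caveat: because `-c:v copy` copies rather than re-encodes, FFmpeg generally cannot start the excerpt on an arbitrary frame, so the clip may begin at the first keyframe after 00:01:00 rather than exactly on it.

```shell
# Cut 00:01:00-00:04:45 out of destEarth.m4v, copying the video stream
# unchanged (-c:v copy) and leaving the audio out (-an).
mars_excerpt() {
  ffmpeg -i destEarth.m4v -ss 00:01:00 -to 00:04:45 -c:v copy -an destEarth_Mars_video.mp4
}

# Usage:
# mars_excerpt
```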


We will now run a similar command to create an “Earth” excerpt. This portion of the film has a similar sequence depicting the wonders of life on Earth and the richness of its society thanks to free-enterprise capitalism and the use of oil and petroleum products:
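The Earth command is missing, and the text does not give its start and end times. Generalizing the Mars command, a hypothetical `make_excerpt` function takes them as arguments; the START and END placeholders must be replaced with the timestamps of the Earth sequence:

```shell
# Hypothetical generalization of the Mars command:
#   $1 = input, $2 = start (HH:MM:SS), $3 = end (HH:MM:SS), $4 = output
# The video stream is copied without re-encoding; audio is dropped.
make_excerpt() {
  ffmpeg -i "$1" -ss "$2" -to "$3" -c:v copy -an "$4"
}

# Usage (START and END are placeholders):
# make_excerpt destEarth.m4v START END destEarth_Earth_video.mp4
```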

You should now have two new files in your directory called destEarth_Mars_video.mp4 and destEarth_Earth_video.mp4. You can test one or both files (or any of the other files in the directory) using the ffplay feature of FFmpeg as well. Simply run:

and/or
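The playback commands are `ffplay` followed by each file name. A small hypothetical helper that plays both excerpts one after the other; `-autoexit` is added so each window closes when its clip ends and the loop can move on to the next file:

```shell
# Play the two excerpts in turn (run from the directory holding them).
play_excerpts() {
  for clip in destEarth_Mars_video.mp4 destEarth_Earth_video.mp4; do
    # -autoexit: close the playback window when the clip finishes.
    ffplay -autoexit "$clip"
  done
}

# Usage:
# play_excerpts
```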

You will see a window open and the video will begin at the specified start point and play through once (in addition, you’ll notice there is no sound in your video). By default, the window stays open after playback ends; close it yourself, or add `-autoexit` to the command to have it close automatically. You will also notice that ffplay commands do not require an -i or an output to be specified because the playback itself is the output.

FFplay is a very versatile media player that comes with a number of options for customizing playback. For example, adding `-loop 0` to the command will loop playback indefinitely.

We have now created our two excerpts for analysis. If we watch these clips separately, there appear to be significant, meaningful differences in the way color and color variety are used. In the next part of the tutorial, we will examine and extract data from the video files to quantify and support this hypothesis.

Color Data Analysis

The use of digital tools to analyze color information in motion pictures is another emerging facet of DH scholarship that overlaps with traditional film studies. The FilmColors project at the University of Zurich, in particular, interrogates the critical intersection of film’s “formal aesthetic features to [the] semantic, historical, and technological aspects” of its production, reception, and dissemination through the use of digital analysis and annotation tools (Flueckiger, 2017). Although there is no standardized method for this kind of investigation at the time of this writing, the ffprobe command offered below is a powerful tool for extracting information related to color that can be used in computational analysis. First, let’s look at another standardized way of representing color information that informs this quantitative analysis: the vectorscope, which plots each pixel’s chroma so that its angle around the center indicates hue and its distance from the center indicates saturation.

And for the “Earth” excerpt:
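The vectorscope commands for both excerpts were lost. One way to build such a view, offered as a sketch rather than the tutorial's exact command, is to split the video into two copies, turn one into a vectorscope with FFmpeg's `vectorscope` filter, and overlay it in the bottom-right corner of the other. The filter settings (`mode=color3`, `graticule=green`) and the function name are assumptions:

```shell
# split       -> two copies of the video, labelled [main] and [scope]
# vectorscope -> turns [scope] into a chroma plot, labelled [vs]
# overlay     -> draws [vs] in the bottom-right corner of [main]
scope_preview() {
  local graph="split=2[main][scope];[scope]vectorscope=mode=color3:graticule=green[vs];[main][vs]overlay=x=W-w:y=H-h"
  ffplay -vf "$graph" "$1"
}

# Usage:
# scope_preview destEarth_Mars_video.mp4
# scope_preview destEarth_Earth_video.mp4
```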

We can also adjust this command to write new video files with vectorscopes as well:

Note the slight but important changes in syntax:

- We have added an `-i` flag because it is an `ffmpeg` command.

- We have specified the output video codec as H.264 with the flag `-c:v libx264` and have left out an option for audio. You could, however, add `-c:a copy` to copy the audio stream (if there is one in the input file) without transcoding, or specify another audio codec here if necessary.

- We have specified the name of the output file.
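Applying the changes listed above to an `ffplay` vectorscope view gives an `ffmpeg` command that writes a new file. The filter graph here (split, then `vectorscope` with `mode=color3:graticule=green`, then `overlay` in the bottom-right corner) is an assumption, since the original command was lost; the function and output file names are hypothetical:

```shell
# Write a copy of the video with a vectorscope overlaid in the corner.
# $1 = input video, $2 = output file. Video is encoded as H.264; as in
# the text, no audio option is given.
write_scope_video() {
  local graph="split=2[main][scope];[scope]vectorscope=mode=color3:graticule=green[vs];[main][vs]overlay=x=W-w:y=H-h"
  ffmpeg -i "$1" -vf "$graph" -c:v libx264 "$2"
}

# Usage:
# write_scope_video destEarth_Mars_video.mp4 destEarth_Mars_vectorscope.mp4
```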

Take a few minutes to watch these videos with the vectorscopes embedded in them. Notice how dynamic (or not) the changes are between the “Mars” and “Earth” excerpts. Compare what you see in the vectorscope to your own impressions of the video itself. We might use observations from these vectorscopes to make determinations about which shades of color appear more regularly or intensely in a given source video, or we may compare different formats side-by-side to see how color gets encoded or represented differently based on different codecs, resolutions, etc.

Although vectorscopes provide a useful, real-time representation of color information, we may want to also access the raw data beneath them. We can then use this data to develop more flexible visualizations that are not dependent on viewing the video file simultaneously and that offer a more quantitative approach to color analysis. In our next commands, we will use ffprobe to produce a tabular dataset that can be used to create a graph of color data.


Color Data Extraction with FFprobe

At the beginning of this tutorial, we used an ffprobe command to view our file’s basic metadata printed to the terminal. In these next examples, we’ll use ffprobe to extract color data from our video excerpts and output this information to .csv files. Within our ffprobe command, we are going to use the signalstats filter to create .csv reports of median color hue information for each frame in the video stream of destEarth_Mars_video.mp4 and destEarth_Earth_video.mp4, respectively.

- `ffprobe` = starts the command

- `-f lavfi` = specifies the libavfilter virtual input device as the chosen format. This is necessary when using `signalstats` and many filters in more complex FFmpeg commands.

- `-i movie=destEarth_Mars_video.mp4` = name of input file

- `,signalstats` = specifies use of the `signalstats` filter with the input file

- `-show_entries` = sets the list of entries that will be shown in the report. These are specified by the next options.

- `frame=pkt_pts_time` = specifies showing each frame with its corresponding `pkt_pts_time`, creating a unique entry for each frame of video

- `:frame_tags=lavfi.signalstats.HUEMED` = creates a tag for each frame that contains the median hue value

- `-print_format csv` = specifies the format of the metadata report

- `> destEarth_Mars_hue.csv` = writes a new `.csv` file containing the metadata report using `>`, a Bash redirection operator. Simply put, this operator takes the output of the command that precedes it and “redirects” it to another location. In this instance, it is writing the output to a new `.csv` file. The file extension provided here should also match the format specified by the `print_format` flag.
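Assembled from the parts above, the command is sketched below as a hypothetical `hue_report` function. The timestamp field is left overridable because recent FFmpeg releases appear to report it as `pts_time` rather than `pkt_pts_time`; treat that as something to check against `ffprobe` on your own installation:

```shell
# Write a CSV of per-frame median hue (signalstats HUEMED) for one video.
# $1 = input video, $2 = CSV file to create.
# TS_FIELD defaults to pkt_pts_time, as in the text; set TS_FIELD=pts_time
# if your ffprobe no longer reports pkt_pts_time.
hue_report() {
  local ts_field="${TS_FIELD:-pkt_pts_time}"
  ffprobe -f lavfi -i "movie=$1,signalstats" \
    -show_entries "frame=$ts_field:frame_tags=lavfi.signalstats.HUEMED" \
    -print_format csv > "$2"
}

# Usage:
# hue_report destEarth_Mars_video.mp4 destEarth_Mars_hue.csv
```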

Next, run the same command for the “Earth” excerpt:

For more information about the signalstats filter and the various metrics that can be extracted from video streams, refer to FFmpeg's Filters Documentation.

You should now have two .csv files in your directory. If you open these in a text editor or spreadsheet program, you will see three columns of data:

Going from left to right, the first two columns tell us where we are in the video: the first simply labels each row as a frame, and the second gives that frame’s timestamp in seconds. Consecutive timestamps differ by roughly 1/30 of a second, matching the video’s frame rate of 29.97 fps, so each row in our .csv corresponds to one frame of video. The third column carries a whole number between 0 and 360, and this value represents the median hue for that frame of video. These numbers are the underlying quantitative data of the vectorscope’s plot and correspond to its position (in degrees) on the circular graticule. Referencing our vectorscope image from earlier, you can see that starting at the bottom of the circle (0 degrees) and moving left, “greens” are around 38 degrees, “yellows” at 99 degrees, “reds” at 161 degrees, “magentas” at 218 degrees, “blues” at 279 degrees, and “cyans” at 341 degrees. Once you understand these “ranges” of hue, you can get an idea of what the median hue value for a given video frame is just by looking at this numerical value.
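To make the hue ranges above concrete, here is a hypothetical helper that assigns each frame's median hue to the nearest of the six reference angles listed in this paragraph and counts frames per color. Nearest-angle binning is a simplification introduced here, not part of the tutorial, and it assumes the three-column CSV layout described above:

```shell
# Hypothetical helper: for a CSV written by the ffprobe command above
# (rows like "frame,<timestamp>,<median hue>"), count how many frames
# have a median hue nearest to each reference angle from the text.
tally_hues() {
  awk -F, '
    function nearest(h,    i, d, best, bestd) {
      bestd = 361
      for (i = 1; i <= 6; i++) {
        d = h - deg[i]
        if (d < 0) d = -d
        if (360 - d < d) d = 360 - d   # hue is circular: 350 is near 0
        if (d < bestd) { bestd = d; best = name[i] }
      }
      return best
    }
    BEGIN {
      split("38 99 161 218 279 341", deg, " ")
      split("green yellow red magenta blue cyan", name, " ")
    }
    NF >= 3 && $3 != "" { count[nearest($3 + 0)]++ }
    END { for (c in count) print c, count[c] }
  ' "$1" | sort
}

# Usage:
# tally_hues destEarth_Mars_hue.csv
```

Running it on both hue reports gives a rough numerical counterpart to the side-by-side vectorscope comparison.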

Additionally, it is worth noting that this value extracted by the signalstats filter is not an absolute or complete measure of an image’s color qualities, but simply a meaningful point of reference for further exploration.

To extract the same data from every video file in a directory at once, we can use a Bash for loop: `for file in *.m4v; do ffprobe -f lavfi -i movie="$file",signalstats -show_entries frame=pkt_pts_time:frame_tags=lavfi.signalstats.HUEMED -print_format csv > "${file%.m4v}.csv"; done`. The body of this loop is the same color metadata extraction command we ran on our two excerpts of Destination Earth, with some slight alterations to the syntax to account for its use across multiple files in a directory:

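- `"$file"` = expands to the name of the current file on each pass through the loop. The enclosing double quotation marks keep the original filename intact, even if it contains spaces (single quotes would stop Bash from expanding the variable at all).

- `> "${file%.m4v}.csv";` = strips the .m4v extension from the original filename and adds .csv when writing the output files. This ensures the names of the original video files match their corresponding `.csv` reports.

- `done` = terminates the loop once all files in the directory have been processed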
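Wrapped in a hypothetical function, and with a guard for the case where the directory contains no .m4v files (the unmatched pattern would otherwise be passed to ffprobe literally), the loop can be sketched as:

```shell
# Run from the directory that holds the .m4v files. Writes one
# <name>.csv hue report next to each <name>.m4v video.
hue_reports_for_dir() {
  for file in *.m4v; do
    # Skip the literal pattern "*.m4v" when nothing matches.
    [ -e "$file" ] || continue
    ffprobe -f lavfi -i movie="$file",signalstats \
      -show_entries frame=pkt_pts_time:frame_tags=lavfi.signalstats.HUEMED \
      -print_format csv > "${file%.m4v}.csv"
  done
}

# Usage:
# cd /path/to/videos && hue_reports_for_dir
```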

You can also change the `-show_entries` list so that signalstats pulls other valuable information related to color. Refer to the filter's documentation for a complete list of visual metrics available.

Once you run this script, you will see each video file in the directory now has a corresponding .csv file containing the specified dataset.

In this tutorial, we have learned:

- To install FFmpeg on different operating systems and how to access the framework in the web-browser

- The basic syntax and structure of FFmpeg commands

- To view basic technical metadata of an audiovisual file

- To transform an audiovisual file through transcoding and re-wrapping

- To parse and edit that audiovisual file by demuxing it and creating excerpts

- To play back audiovisual files using `ffplay`

- To create new video files with embedded vectorscopes

- To export tabular data related to color from a video stream using `ffprobe`

- To craft a Bash for loop to extract color data information from multiple video files with one command

At a broader level, this tutorial aspires to provide an informed and enticing introduction to how audiovisual tools and methodologies can be incorporated in Digital Humanities projects and practices. With open and powerful tools like FFmpeg, there is vast potential for expanding the scope of the field to include more rich and complex types of media and analysis than ever before.

Further Resources


FFmpeg has a large and well-supported community of users across the globe. As such, there are many open-source and free resources for discovering new commands and techniques for working with audio-visual media. Please contact the author with any additions to this list, especially educational resources in Spanish for learning FFmpeg.

- The Official FFmpeg Documentation

- ffmprovisr from the Association of Moving Image Archivists

- Ashley Blewer’s Audiovisual Preservation Training

- Andrew Weaver’s Demystifying FFmpeg

- Ben Turkus’ FFmpeg Presentation

- Reto Kromer’s FFmpeg Cookbook for Archivists


Open-Source AV Analysis Tools using FFmpeg

Champion, E. (2017). “Digital Humanities is text heavy, visualization light, and simulation poor.” Digital Scholarship in the Humanities, 32(S1), i25-i32.

Flueckiger, B. (2017). “A Digital Humanities Approach to Film Colors.” The Moving Image, 17(2), 71-94.

Hockey, S. (2004). “The History of Humanities Computing.” In A Companion to Digital Humanities, ed. Susan Schreibman, Ray Siemens, and John Unsworth. Oxford: Blackwell.